The GRACE Framework: An AI-Augmented Development Model for Controlled Software Delivery

April 22, 2026

AI has made code generation fast and accessible, but development systems have not evolved at the same pace. Code is still being produced, reviewed, and deployed under assumptions that no longer hold.

AI-assisted development has been shown to increase productivity by 31.4%, but also introduces 23.7% more security vulnerabilities.

The issue is not the tools. It is that the systems used to build, review, and ship software have not adapted to this new reality.

This article introduces an AI-augmented development model designed to maintain control over ownership, review, data exposure, system consistency, and traceability. We will break down the GRACE framework and explain how each component addresses a specific failure point in modern development workflows.

Why AI Development Requires a Different Operating Model

AI has changed how software is produced, not just how fast it is delivered. The underlying assumptions of development no longer hold when code is generated instead of written.

Code Generation Is No Longer the Constraint

AI agents can now produce full implementations in a single step. What once took hours or days can now be generated in minutes. Writing code is therefore no longer the bottleneck in software development.

Development Assumptions No Longer Hold

Development systems were built around how code used to be written. Code was created line by line, with clear intent and understanding behind each change.

That is no longer the case, especially since the introduction of vibe coding. Code is now generated in blocks, often based on prompts rather than deliberate design. As a result, both volume and variability increase. The same problem can produce different outputs depending on how it is described.

Existing Workflows Were Not Designed for This

These changes expose a mismatch. Most workflows still assume that developers fully understand the code they ship and that changes are incremental.

With AI, neither assumption is reliable. Review processes struggle to keep up with the volume of generated code. Ownership becomes unclear when no single developer authored the logic. Control weakens as variability spreads across the system.

AI changes the nature of development. The system around it must change as well.

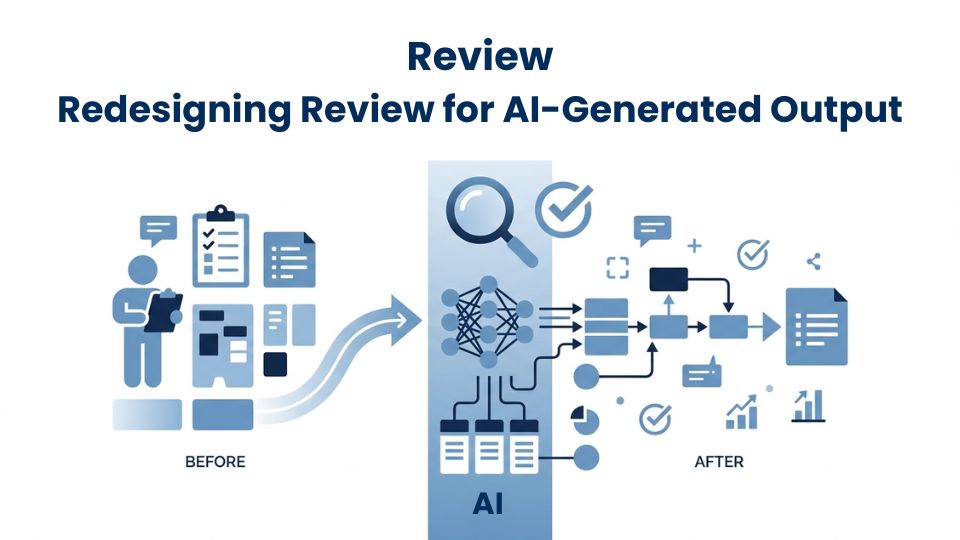

The GRACE Framework: Structuring AI-Augmented Development

AI changes how code is produced. The GRACE framework defines how that code is handled within a development system. It establishes the conditions required to build, review, and ship software when AI is part of the workflow.

The framework is based on five control points where development systems tend to break when AI is introduced. These are not abstract concerns. They reflect how ownership, review, data handling, system structure, and traceability behave under AI-generated output.

Grasp: Establishing Ownership Over Generated Code

What changes with AI

Code is no longer written in a linear, intentional way. It is generated in response to prompts. This removes the direct link between the developer and the logic being produced.

Where systems break

When no one has full ownership of the code, responsibility becomes unclear. Developers may run and deploy generated output without fully understanding it. This makes debugging slower and increases the risk of incorrect assumptions during incidents.

What the model requires

Ownership must be explicit. Every generated output needs a responsible developer who understands and stands behind it. This assignment happens at the point of generation, not after issues appear.
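
As a minimal sketch of what assigning ownership at the point of generation could look like, the Python snippet below refuses to register generated output that has no named owner. The names `GeneratedChange` and `register_change` are hypothetical and chosen for illustration; they are not part of any published GRACE tooling.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class GeneratedChange:
    """A unit of AI-generated code with a named owner (hypothetical type)."""
    change_id: str
    owner: str  # developer who understands and stands behind the output
    summary: str
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def register_change(change_id: str, owner: str, summary: str) -> GeneratedChange:
    """Create the record when code is generated; reject output with no owner."""
    if not owner.strip():
        raise ValueError("generated output must have a responsible owner")
    return GeneratedChange(change_id=change_id, owner=owner.strip(), summary=summary)
```

Because the record is created when the code is generated, an unowned change fails immediately rather than surfacing later during an incident.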

Review: Redesigning Review for AI-Generated Output

What changes with AI

AI increases both the volume and variability of code. Outputs can differ significantly even for similar problems, depending on how they are prompted.

Where systems break

Traditional reviews focus on whether the code works. They do not account for how well the output fits within the system. As a result, structural issues and inconsistencies are often missed.

What the model requires

Review must extend beyond correctness. It needs to evaluate alignment with system design, security posture, and long-term maintainability. This ensures that the generated code fits into the system without introducing hidden issues.
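
One way to make this concrete is a merge gate that treats every review dimension as mandatory. The sketch below is an assumption about how a team might encode it; `REQUIRED_CHECKS`, `ReviewResult`, and `can_merge` are illustrative names, and the four dimensions simply mirror the ones listed above.

```python
from dataclasses import dataclass

# Review dimensions named in the text; the identifiers are illustrative.
REQUIRED_CHECKS = ("correctness", "design_alignment", "security", "maintainability")


@dataclass
class ReviewResult:
    reviewer: str
    passed: dict[str, bool]  # check name -> outcome


def missing_or_failed(result: ReviewResult) -> list[str]:
    """Return checks that were skipped or failed, in a stable order."""
    return [name for name in REQUIRED_CHECKS if not result.passed.get(name, False)]


def can_merge(result: ReviewResult) -> bool:
    """A change is mergeable only when every dimension was reviewed and passed."""
    return not missing_or_failed(result)
```

A review that only confirms the code works would leave `design_alignment`, `security`, and `maintainability` unset, so `can_merge` returns `False` until those are evaluated too.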

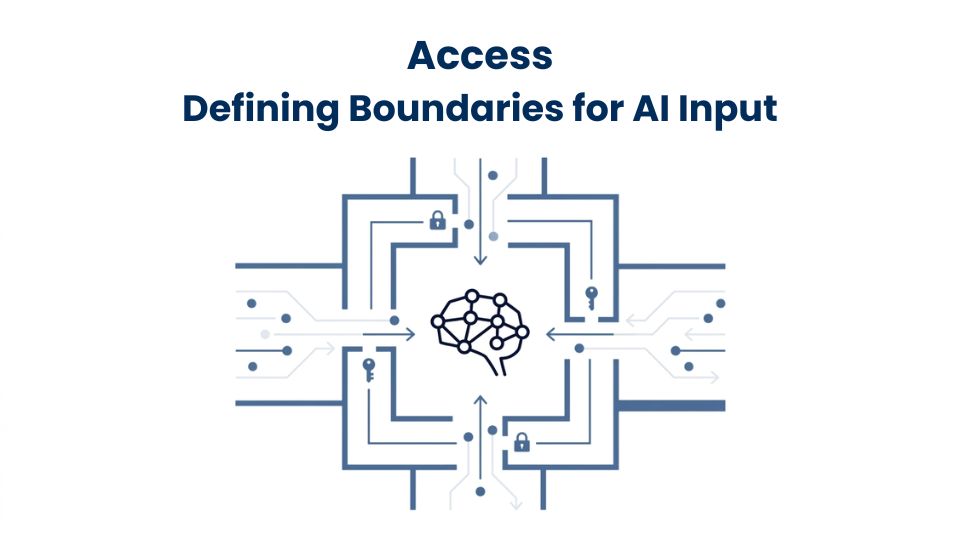

Access: Defining Boundaries for AI Input

What changes with AI

Prompts become inputs to external systems. In many cases, these systems operate outside the organization’s direct control.

Where systems break

Without clear boundaries, sensitive data can be included in prompts. This creates exposure risks that are not visible in the code itself but exist at the input level.

What the model requires

Data access must be controlled before AI is used. Teams need defined rules for what can and cannot be included in prompts. This ensures that inputs are governed before outputs are generated.
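
A simple illustration of governing inputs is a check that runs before a prompt leaves the organization. The deny-list below is an assumption made for the example: the pattern names and regular expressions are placeholders, and a real policy would be defined by the team and would likely cover more than pattern matching.

```python
import re

# Illustrative deny-list; a real policy would be defined by the team.
BLOCKED_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_violations(prompt: str) -> list[str]:
    """Return the names of blocked patterns that appear in the prompt."""
    return [name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(prompt)]


def check_prompt(prompt: str) -> str:
    """Return the prompt unchanged if it is clean; otherwise raise before sending."""
    violations = find_violations(prompt)
    if violations:
        raise PermissionError(f"prompt blocked: {', '.join(violations)}")
    return prompt
```

Placing `check_prompt` in front of every call to an external model keeps the rule at the input level, which is where the exposure occurs.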

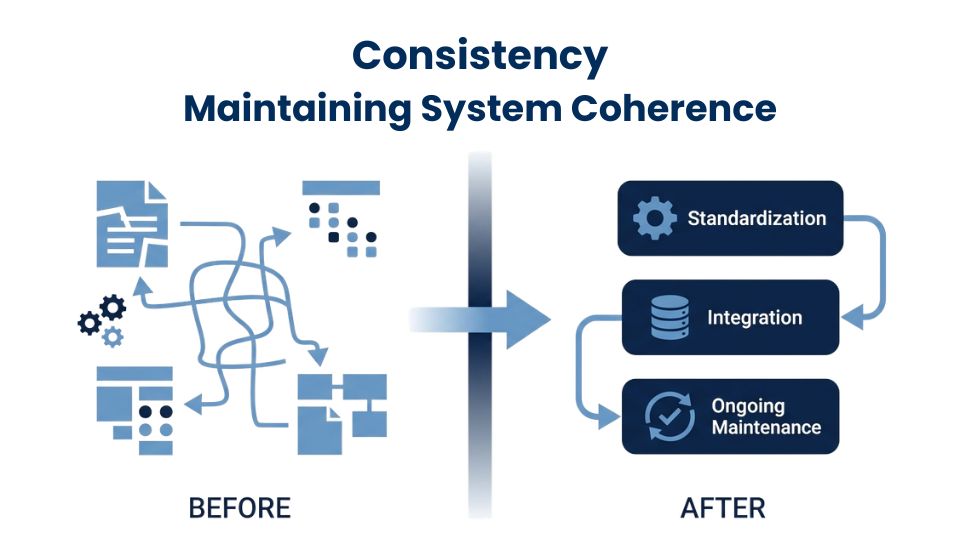

Consistency: Maintaining System Coherence

What changes with AI

AI does not enforce a single way of building. Each developer can prompt differently, structure outputs differently, and arrive at different implementations for the same problem.

Where systems break

This creates divergence inside the same system. Over time, this turns into architectural patchwork. The system loses a coherent identity. Components no longer align, even when they solve similar problems.

As development continues, this inconsistency compounds. Each sprint adds more variation. The cost is not immediate failure, but increasing complexity that becomes harder to manage.

What the model requires

Consistency must be enforced across the system. This includes standardizing prompts, outputs, and design decisions. The goal is to ensure that all generated code aligns with a shared structure.
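
Standardizing prompts can be as simple as routing every request through one shared template. The following sketch uses Python's standard `string.Template`; the conventions listed in `TEAM_CONVENTIONS` and the function name `build_prompt` are hypothetical placeholders, not rules prescribed by the framework.

```python
from string import Template

# Hypothetical team-wide conventions; the values are placeholders.
TEAM_CONVENTIONS = (
    "Follow the existing repository layout.",
    "Reuse the shared error-handling helpers instead of adding new ones.",
    "Include unit tests for every public function.",
)

PROMPT_TEMPLATE = Template(
    "Task: $task\n"
    "Target module: $module\n"
    "Conventions:\n$conventions\n"
)


def build_prompt(task: str, module: str) -> str:
    """Render every request through one template so outputs share a structure."""
    conventions = "\n".join(f"- {rule}" for rule in TEAM_CONVENTIONS)
    return PROMPT_TEMPLATE.substitute(task=task, module=module, conventions=conventions)
```

Two developers asking for similar changes then send prompts that differ only in the task and target, which narrows the range of implementations the model is likely to return.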

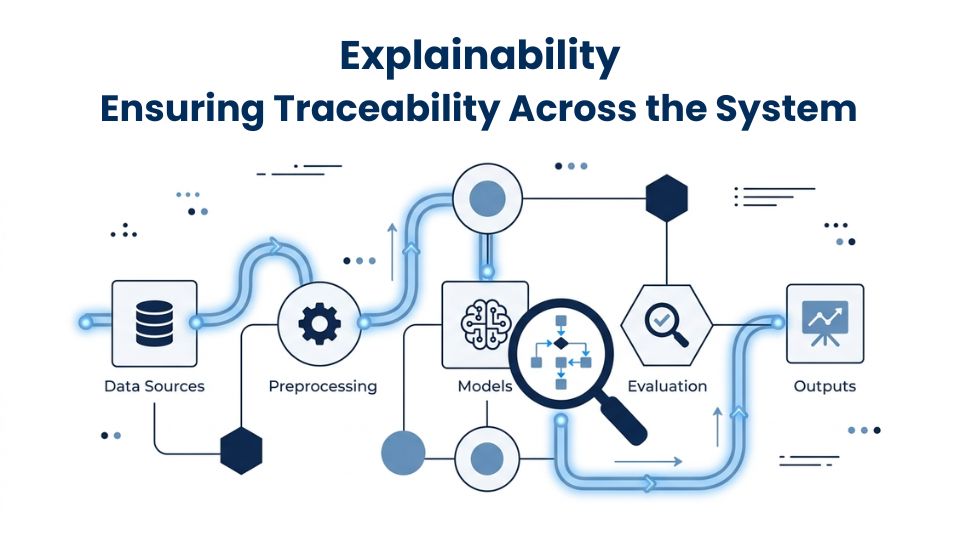

Explainability: Ensuring Traceability Across the System

What changes with AI

The origin of code becomes unclear. In production, it is no longer obvious whether a piece of logic was written by a developer or generated by AI.

Where systems break

This lack of clarity creates a traceability gap. There is no audit trail that explains how the code was produced. When questions arise, teams cannot answer them with certainty. Auditors ask who wrote the code, but a prompt is not a sufficient answer.

This also affects operations. Incident response slows because there is no clear path back to the source of a change. Teams spend time reconstructing what happened instead of resolving the issue.

What the model requires

Traceability must be built into the workflow. Every change needs a clear record of what was generated, who initiated it, which prompt was used, and who reviewed it. This creates a system that can be understood, audited, and managed under real operating conditions.
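
The record described above maps naturally onto a small data structure. This is a sketch under stated assumptions: the `TraceRecord` fields and the JSON-lines output format are illustrative choices, and the generated code is stored as a SHA-256 fingerprint rather than in full.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TraceRecord:
    """One audit entry per change; field names are illustrative."""
    change_id: str
    initiated_by: str
    prompt: str
    reviewed_by: str
    output_sha256: str  # fingerprint of what was generated


def make_record(change_id: str, initiated_by: str, prompt: str,
                reviewed_by: str, generated_code: str) -> TraceRecord:
    """Build a record that links the output to its initiator, prompt, and reviewer."""
    digest = hashlib.sha256(generated_code.encode("utf-8")).hexdigest()
    return TraceRecord(change_id, initiated_by, prompt, reviewed_by, digest)


def to_audit_line(record: TraceRecord) -> str:
    """Serialize to one JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

During an incident, the hash lets a team confirm whether the code in production matches what was generated and reviewed, and the remaining fields answer who initiated and who approved it.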

The GRACE framework defines how development operates when AI is part of the process. It does not slow development down. It ensures that increased speed does not come at the cost of control.

Frequently Asked Questions

Which companies use the GRACE framework in their software development?

A. The GRACE framework is not yet a widely standardized industry model, so adoption is not publicly documented across companies. It represents a structured approach to AI-augmented development. At MatrixTribe, we apply this model in our development workflows to ensure control over ownership, review, data access, consistency, and traceability.

Where can I find official documentation for the GRACE framework?

A. There is no centralized or widely published documentation for the GRACE framework. It is not an industry-standard framework with formal governing bodies. Its structure and application are typically shared through practical implementations, presentations, and company-specific content, including how MatrixTribe applies it in AI-augmented development.

What is the GRACE framework and its core components?

A. The GRACE framework is an AI-augmented development model that defines how software should be built when AI is part of the workflow. Its core components are Grasp (ownership), Review (evaluation), Access (data control), Consistency (system alignment), and Explainability (traceability), each addressing a key point where development systems can break.

Conclusion

AI is now part of how software is built. It has changed how quickly code can be produced, but it has also changed how systems behave under that speed.

Development workflows that assume intentional authorship, incremental change, and full visibility can no longer support this environment. The result is not just faster delivery. It is increased ambiguity across ownership, review, data handling, system structure, and traceability.

The GRACE framework defines how development must operate when AI is involved. It establishes clear ownership, structured review, controlled inputs, system consistency, and full traceability. These are not optional layers. They are required conditions for building systems that can be understood, maintained, and trusted.

AI will continue to accelerate development. That shift is already underway. The question is whether the systems around it are designed to handle that change. Without structure, speed introduces risk. With the right model, it becomes an advantage.

Design AI Development Systems That Scale Without Losing Control

If your team is adopting AI but your development workflows have not evolved with it, the risk is already in your system. At MatrixTribe, our development workflows are structured around the GRACE framework. This allows our team to move at speed while maintaining control over ownership, review, data access, consistency, and traceability.

Schedule a call with us to structure your AI development systems without losing control.
